Mushi is a project designed to generate unique, self-animating paintings with possibilities for user interaction and collaboration. Created in Processing, the program uses relatively simple procedural animation techniques to create a complex, self-designing artwork without becoming overly cumbersome.

So far, the work created with this project has been largely non-representational, so I wanted to try a more representational approach.

For this experiment, I altered the code in two ways so that it recreates an existing image while keeping its unique painterly look. First, the brushes move in response to the relative lightness or darkness of the part of the image they pass over, which changes the texture of the strokes. Second, depending on the lightness of the underlying image, a brushstroke's color may switch to its opposite, giving the image visual contrast.
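Mushi's actual Processing code is not shown here, so the following is only a minimal Python sketch of the idea, under stated assumptions. It assumes the source image is a grayscale grid of brightness values from 0.0 (black) to 1.0 (white). The rule it uses (dark areas slow the brush and let its heading wander, light areas let it travel further and straighter) is hypothetical, as are the names `brush_step`, `base_speed`, `wobble` and `flip_threshold`, and the choice that light areas (rather than dark ones) trigger the color switch.

```python
import math
import random

def sample_brightness(img, x, y):
    """Brightness (0.0 = black, 1.0 = white) of the pixel under (x, y), clamped to the image."""
    h, w = len(img), len(img[0])
    xi = min(max(int(x), 0), w - 1)
    yi = min(max(int(y), 0), h - 1)
    return img[yi][xi]

def brush_step(img, x, y, heading, base_speed=2.0, wobble=0.6,
               flip_threshold=0.5, rng=random):
    """Advance one brush by one frame over a grayscale source image.

    Hypothetical rule: dark pixels slow the brush and let its heading wander more,
    light pixels let it travel further in a straighter line, so the stroke texture
    follows the image. Returns (x, y, heading, use_opposite_color).
    """
    b = sample_brightness(img, x, y)
    heading += rng.uniform(-wobble, wobble) * (1.0 - b)  # more wander in dark areas
    speed = base_speed * (0.25 + 0.75 * b)                # faster in light areas
    x += math.cos(heading) * speed
    y += math.sin(heading) * speed
    use_opposite = b > flip_threshold  # assumed: light areas switch to the opposite color
    return x, y, heading, use_opposite
```

Calling `brush_step` once per frame for each brush and feeding its output back in gives a wandering stroke whose spacing and jitter track the image; the returned flag decides whether that frame's dab uses the brush's color or its complement.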

Notably, when creating the complementary color scheme, I used RYB (Red-Yellow-Blue, the primary colors and basis of art color theory) rather than RGB (Red-Green-Blue, the colors of computer pixels), which made the result look more "painterly" to the human eye. For example, on an RGB color wheel the complement of yellow is blue and the complement of red is cyan, whereas on the RYB color wheel the complement of yellow is purple and the complement of red is green.
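One common way to get RYB complements from RGB colors is to remap the hue angle onto an RYB wheel, rotate it by 180°, and map it back. The sketch below does this with Python's `colorsys` module and a piecewise-linear hue remap; the anchor points (red at 0°, RGB yellow at 60° moved to 120° on the RYB wheel, blue at 240° in both) are an assumption for illustration and not necessarily how Mushi computes its complements.

```python
import colorsys

# Hypothetical anchor hues in degrees: red stays at 0, RGB yellow (60) sits at
# RYB 120, blue stays at 240. Hues in between are interpolated linearly.
_RGB_STOPS = [0.0, 60.0, 240.0, 360.0]
_RYB_STOPS = [0.0, 120.0, 240.0, 360.0]

def _remap(h, src, dst):
    """Linearly map hue h (degrees) from one set of anchor stops to another."""
    h %= 360.0
    for i in range(len(src) - 1):
        if src[i] <= h <= src[i + 1]:
            t = (h - src[i]) / (src[i + 1] - src[i])
            return dst[i] + t * (dst[i + 1] - dst[i])
    return h  # not reached for h in [0, 360)

def ryb_complement(rgb):
    """Complement of an (r, g, b) color (0..255) taken on an approximate RYB wheel."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    ryb_hue = _remap(h * 360.0, _RGB_STOPS, _RYB_STOPS)
    comp_ryb = (ryb_hue + 180.0) % 360.0          # opposite side of the RYB wheel
    comp_hue = _remap(comp_ryb, _RYB_STOPS, _RGB_STOPS)
    r2, g2, b2 = colorsys.hsv_to_rgb(comp_hue / 360.0, s, v)  # keep saturation and value
    return tuple(round(c * 255) for c in (r2, g2, b2))
```

With these anchors, yellow `(255, 255, 0)` maps to purple `(255, 0, 255)`, red maps to a green, and blue maps to an orange, matching the RYB pairs described above rather than the RGB ones.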

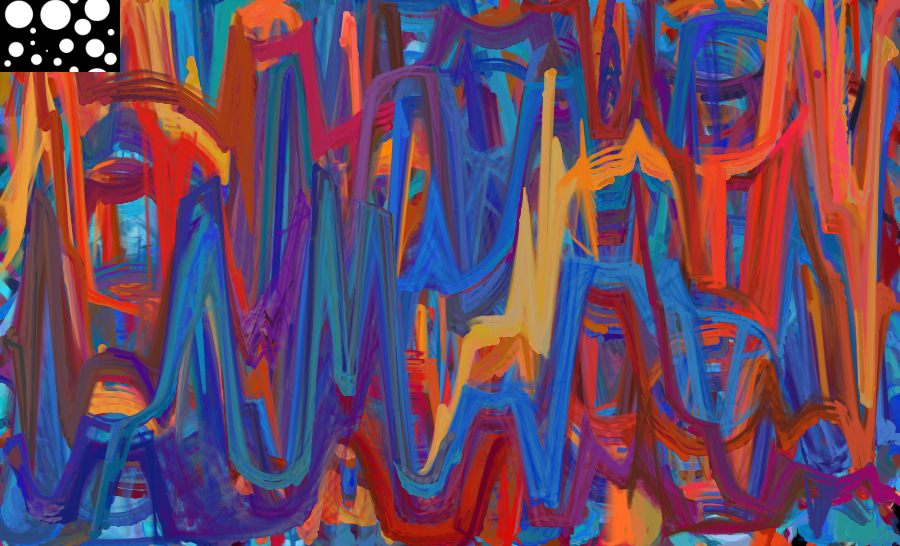

For fun, I've also been looking into other types of programmatic distortions that can be combined with the brushstrokes to create some neat effects. The spiral distortion rotates the position of the brush around a point by an amount that depends on how close the brush is to that point. Spirals are most interesting when several are used at once.
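A minimal sketch of a spiral distortion, assuming each spiral has a centre, a maximum rotation `strength` (radians) and a `radius` of influence, with a linear falloff so that points near the centre rotate the most and points at or beyond the radius do not move. The falloff shape and all names are hypothetical; applying several spirals in sequence gives the combined effect.

```python
import math

def spiral(x, y, cx, cy, strength, radius):
    """Rotate (x, y) around (cx, cy); closer points are rotated further.

    Assumed falloff: angle = strength * (1 - d / radius) inside the radius, 0 outside.
    """
    dx, dy = x - cx, y - cy
    d = math.hypot(dx, dy)
    if d >= radius:
        return x, y
    angle = strength * (1.0 - d / radius)
    c, s = math.cos(angle), math.sin(angle)
    return cx + dx * c - dy * s, cy + dx * s + dy * c

def multi_spiral(x, y, spirals):
    """Apply several (cx, cy, strength, radius) spirals one after another."""
    for cx, cy, strength, radius in spirals:
        x, y = spiral(x, y, cx, cy, strength, radius)
    return x, y
```

The brush keeps moving in undistorted coordinates; only the point where it is drawn passes through `multi_spiral`, so the strokes swirl around each centre without the brushes themselves getting trapped there.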

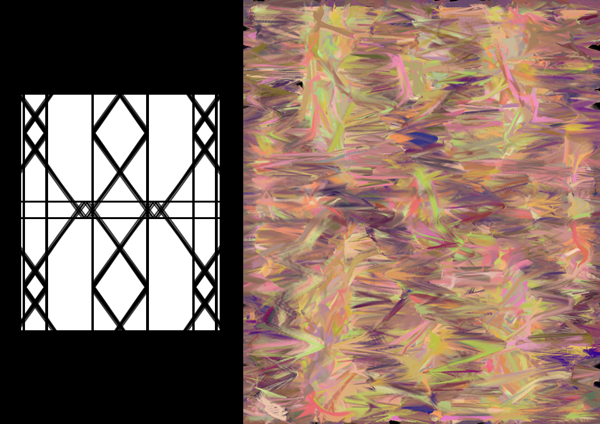

Another experiment is based on lens distortion (both concave and convex lenses have been tested) and has since expanded into more general glass distortions, such as brick glass or glass art.
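As an illustration only, a circular lens can be modelled as a radial remapping of the brush position. In this sketch (hypothetical names and formula), a point at distance `d` from the lens centre is moved to `radius * (d / radius) ** power`: `power < 1` pushes points outward for a bulging, convex-style magnification, and `power > 1` pulls them inward for a concave-style pinch. Points on or outside the rim are left alone, so the edge of the lens is seamless.

```python
import math

def lens(x, y, cx, cy, radius, power):
    """Radial lens centred on (cx, cy) with the given radius.

    power < 1: bulge (convex-style); power > 1: pinch (concave-style).
    Points on or outside the rim, and the exact centre, are unchanged.
    """
    dx, dy = x - cx, y - cy
    d = math.hypot(dx, dy)
    if d == 0.0 or d >= radius:
        return x, y
    scale = radius * (d / radius) ** power / d
    return cx + dx * scale, cy + dy * scale
```

Shapes such as brick glass could, under the same assumption, be approximated by tiling many small lenses, though the post does not say how those were built.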

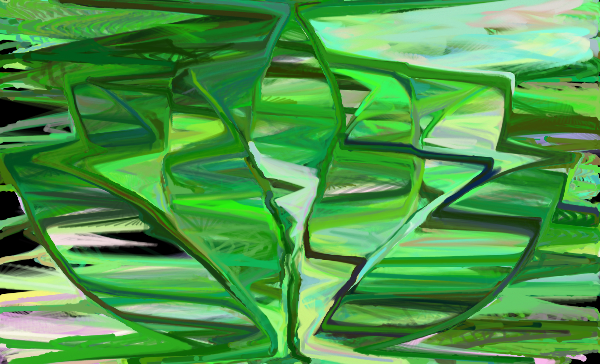

below: distortion based on vector shapes

The lens distortion only works with vector shapes, so I wanted to find an alternative that could use black-and-white raster images as the basis of the distortion.

What I came up with was a means to create a distortion based on "stretching" the canvas over the source image.

To visualize this, I imagined the black-and-white source image as a mountainous landscape, with the lighter points as the peaks and the darker points as the valleys. The canvas is then stretched over the entire landscape, and the brushstrokes are drawn where they would appear if the landscape were viewed from above.

This stretching can be done horizontally, vertically, or both.
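One possible reading of the "stretched canvas" model, sketched in Python under loudly stated assumptions: treat each row (and column) of the grayscale image as a height profile, measure the cumulative length of a cloth draped along that profile, spread the canvas evenly along the cloth, and then ask where each canvas coordinate lands when viewed from above. Steep slopes use up more cloth per pixel, so strokes get compressed there. This interpretation, the `height_scale` parameter and all function names are hypothetical, not Mushi's code.

```python
import bisect
import math

def surface_length(heights, height_scale=20.0):
    """Cumulative length of a cloth draped over one line of the height map.

    heights are brightness values 0..1 (light = peak); height_scale is a
    hypothetical knob for how tall the peaks are relative to pixel spacing.
    """
    cum = [0.0]
    for i in range(1, len(heights)):
        dh = (heights[i] - heights[i - 1]) * height_scale
        cum.append(cum[-1] + math.sqrt(1.0 + dh * dh))
    return cum

def seen_from_above(u, cum):
    """Ground position of canvas coordinate u (0..n) when the canvas covers the whole line."""
    n = len(cum) - 1
    target = u / n * cum[-1]  # the canvas is spread evenly along the surface
    i = bisect.bisect_left(cum, target)
    if i == 0:
        return 0.0
    if i > n:
        return float(n)
    return (i - 1) + (target - cum[i - 1]) / (cum[i] - cum[i - 1])

def build_lookups(img, height_scale=20.0):
    """Surface-length tables for every row (horizontal) and every column (vertical)."""
    rows = [surface_length(row, height_scale) for row in img]
    cols = [surface_length([row[c] for row in img], height_scale)
            for c in range(len(img[0]))]
    return rows, cols

def stretch(u, v, lookups, horizontal=True, vertical=True):
    """Map a brush position (u, v) on the canvas to its distorted screen position."""
    rows, cols = lookups
    x, y = u, v
    if horizontal:
        x = seen_from_above(u, rows[min(max(int(v), 0), len(rows) - 1)])
    if vertical:
        y = seen_from_above(v, cols[min(max(int(x), 0), len(cols) - 1)])
    return x, y
```

The `horizontal` and `vertical` flags correspond to stretching in one direction or both. Because `build_lookups` touches every pixel, an animated source would need it rebuilt once per frame, which is where a large source image becomes expensive.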

Animations can also be used as the source for the distortion. However, this requires the "stretching" to be recomputed for every frame, which can cause the animation to stall or slow down if the source image is too large.

Because the brushstrokes only cover part of the canvas at a time, images based on an animation are also less clear, since each one is built up from several frames of the animation overlapping each other.

The source images can also be used to control which parts of the canvas are drawn. If an area of the source image is dark, the program does not draw the brushstroke in that segment. As a result, only the light areas (in IR images, the objects closest to the camera) are drawn in.
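A minimal sketch of the masking step, assuming the mask is a grayscale grid of 0..1 values and a stroke is a list of (x, y) points. The choice to skip a segment when either endpoint is dark, the `threshold` value, and the function names are all hypothetical.

```python
def is_lit(mask_img, x, y, threshold=0.5):
    """True if the mask pixel under (x, y) is at least `threshold` bright (clamped to the image)."""
    h, w = len(mask_img), len(mask_img[0])
    xi = min(max(int(x), 0), w - 1)
    yi = min(max(int(y), 0), h - 1)
    return mask_img[yi][xi] >= threshold

def visible_segments(mask_img, points, threshold=0.5):
    """Keep only the stroke segments whose two endpoints both sit on light pixels."""
    return [(a, b) for a, b in zip(points, points[1:])
            if is_lit(mask_img, a[0], a[1], threshold)
            and is_lit(mask_img, b[0], b[1], threshold)]
```

With an IR depth image as the mask, the surviving segments are the ones over nearby objects, so the painting builds up only where something is close to the camera.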

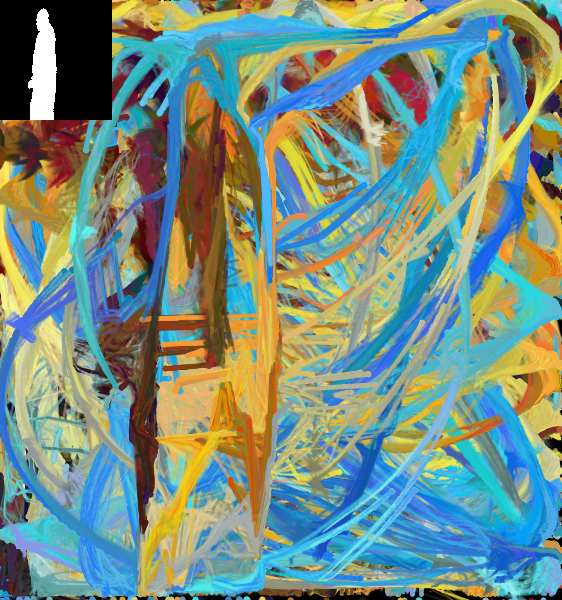

I was fortunate enough to collaborate recently with Sudarshan Prashanth (Prash). Prash has a lot of experience working with the Kinect and with Max, a platform useful for real-time video and sound analysis.

Together, we developed a version of Mushi-Distortion that uses Kinect input to control the position and color intensity of the brushstrokes.

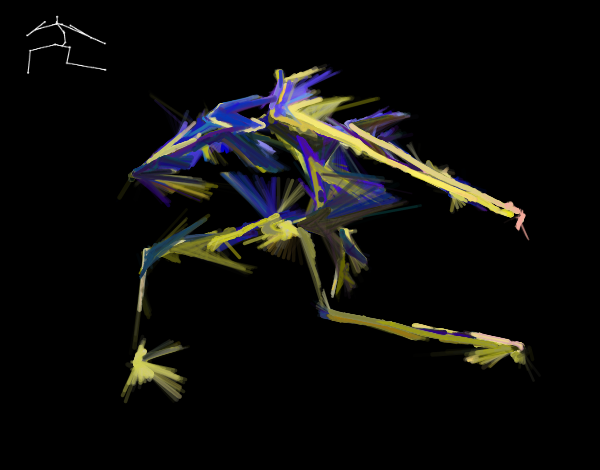

below: early tests of drawing a person based on footage captured by the Kinect

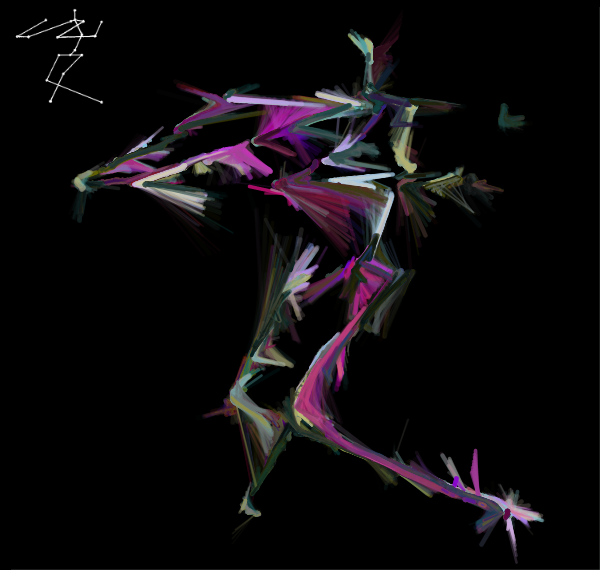

below: drawing from the Kinect with background subtraction

below: test with color intensity changing based on movement registered by the Kinect

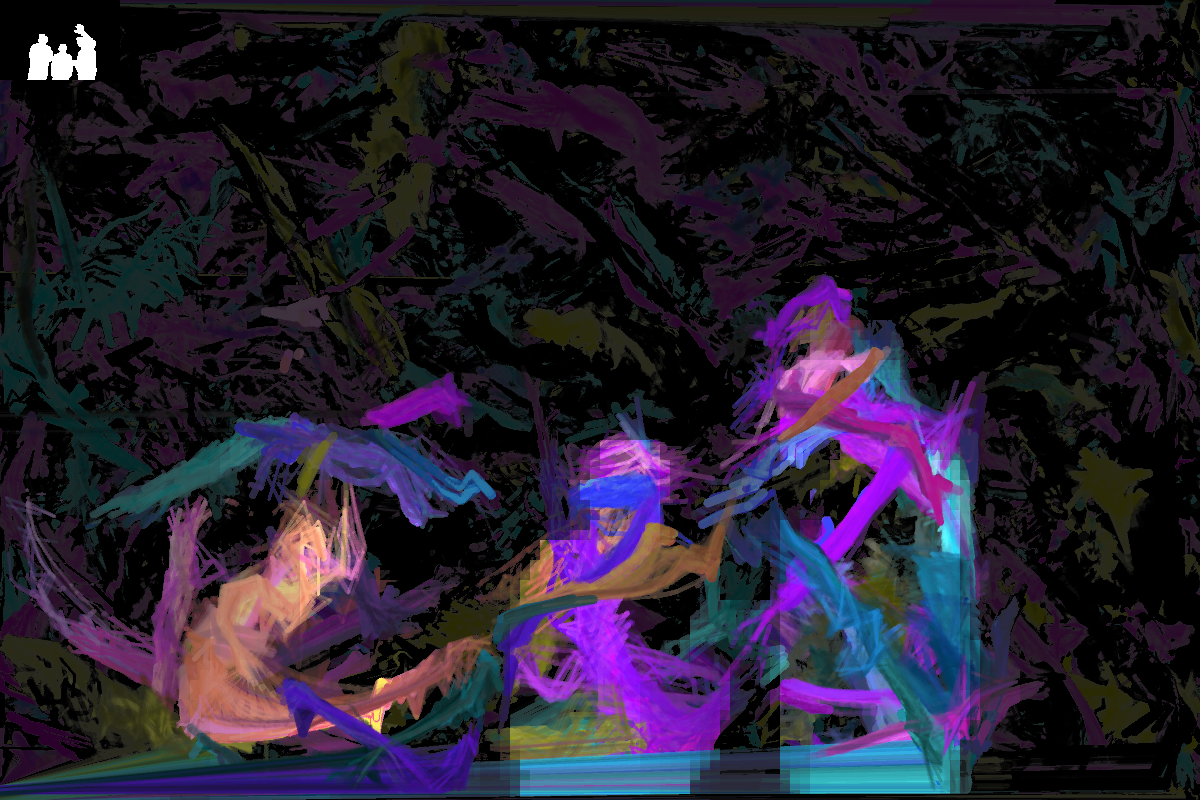
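The Kinect processing itself was done with Max and is not described in detail here, so this is only a rough Python sketch of the two standard techniques the captions mention: background subtraction against a captured empty-scene depth frame, and a movement measure (frame differencing) that drives color intensity. Depth frames are assumed to be 2D lists of raw depth values; the thresholds, the smoothing step and all names are hypothetical.

```python
def foreground_mask(depth, background, min_diff=50):
    """Background subtraction: True where the live depth frame differs from a
    captured empty-scene frame by more than min_diff (raw depth units)."""
    return [[abs(d - b) > min_diff for d, b in zip(drow, brow)]
            for drow, brow in zip(depth, background)]

def motion_amount(prev, curr, mask=None, min_diff=20):
    """Fraction (0..1) of pixels, optionally foreground only, that changed between two frames."""
    changed = total = 0
    for y, (prow, crow) in enumerate(zip(prev, curr)):
        for x, (p, c) in enumerate(zip(prow, crow)):
            if mask is not None and not mask[y][x]:
                continue
            total += 1
            if abs(c - p) > min_diff:
                changed += 1
    return changed / total if total else 0.0

def smoothed_intensity(previous, motion, gain=4.0, alpha=0.2):
    """Ease the color intensity toward the amplified motion level (clamped to 1) to avoid flicker."""
    target = min(1.0, motion * gain)
    return previous + alpha * (target - previous)
```

In this sketch the mask would decide where brushstrokes are drawn (the person rather than the room), while the smoothed intensity would scale the saturation of the stroke colors from frame to frame.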

below: screenshots taken during the AME Fall 2015 showcase

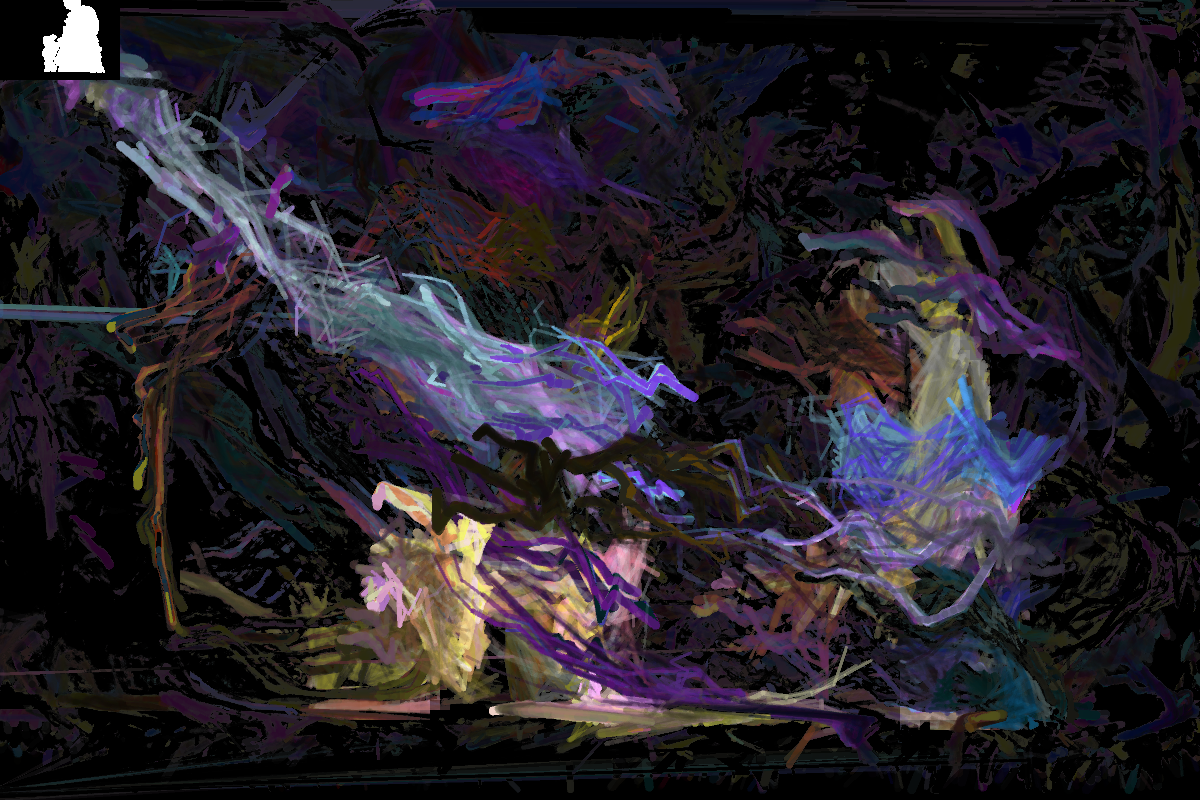

Varsha Iyengar has been working with mocap technology to determine common dance poses (poses that dancers return to in between moves). We have combined her wireframe models with my code to better convey the energy and movement of dance in a still image.